Many surveillance situations involve difficult lighting conditions. One particularly challenging case is a scene in which light levels vary widely at the same time, referred to as a wide dynamic range (WDR) scene. Typical situations include:

* Surveillance of entrance doors with daylight outside and a darker indoor environment. This is, for example, very common in retail and office applications.

* Vehicles entering a parking garage or tunnel, also with daylight outside and low light levels indoors.

* City surveillance, transportation, perimeter surveillance and other outdoor applications, where parts of the scene are in direct sunlight and other parts are in deep shadow.

* Vehicles with strong headlights, driving directly towards the camera.

* Environments with lots of reflected light, for example, in office buildings with many windows or in shopping malls.

Users need to be able to reliably identify persons, objects, vehicles and activities across a wide range of lighting conditions. Security cameras must therefore be capable of detecting items in both dark and extremely bright areas.

Wide dynamic range imaging is a method for producing images that reproduce the full content of scenes whose dynamic range is too wide for a standard camera to capture in a single image. This white paper explains why standard cameras struggle with WDR scenes and how good WDR performance can be achieved in a video surveillance camera.

Background

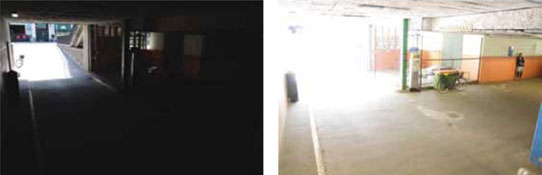

Standard surveillance cameras (without WDR) have difficulty with scenes that contain great variation in light levels. The example shows such a scene captured by a standard camera at two different exposures. The image on the left is exposed to make the dark part visible, which burns out the bright areas; the image on the right is exposed to make the bright part visible, which loses detail in the dark areas.

Clearly, neither image contains information from the full scene. In Figures 3 and 4, insets show data from the short-exposure image within the long-exposure image, and vice versa. A good WDR surveillance camera can capture both extremes in a single image, ie, clearly show details both in the well-lit entrance and in the dark shadows inside the parking garage. In other words, WDR techniques make it possible to capture information from all light levels in the scene at the same time.

In principle, some information can be extracted from the dark areas of the darker image (Figure 5), but comparing the result with the brighter image, or with an image from a camera with WDR capability (Figure 6), clearly shows the limitations of a standard camera's modest dynamic range.
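Why is brightening the dark areas afterwards not enough? The sketch below is a hypothetical illustration, assuming an 8-bit image in which the shadow region, exposed for the bright areas, only uses code values 0 to 15. A digital gain spreads those values across the tonal range, but it cannot create new tones, so the shadows stay coarsely posterised (and any noise in them is amplified by the same gain).

```python
def brighten(pixels, gain, max_value=255):
    """Apply a digital gain to 8-bit pixel values, clipping at max_value."""
    return [min(p * gain, max_value) for p in pixels]

# Assumed shadow region of a dark exposure: it only uses code values 0..15.
shadow = list(range(16))
brightened = brighten(shadow, gain=16)

print(len(set(brightened)))           # 16 distinct tones, same as before
print(brightened[1] - brightened[0])  # 16: each tonal step is now 16 codes wide
```

A properly exposed capture of the same region could use up to 256 levels, which is the detail a WDR camera tries to preserve.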

Challenges

Light, pixels and dynamic range

Light is made up of discrete bundles of energy called photons. The more intense the light, the more photons per second illuminate the scene. A camera can detect photons reflected from a scene, but it cannot detect all the available photons. One limitation is that a camera can only handle a limited number of photons per exposure interval. A camera image is therefore only an estimate of the scene.

Cameras accumulate photons for a limited time, called the exposure time. In a video camera, the maximum exposure time is limited by the frame rate. Because bright and dark areas may be present in the same image, a single exposure time can be too long for the bright areas and too short for the dark areas at the same time. The ideal exposure time for a pixel is the one that maximises its signal-to-noise ratio (SNR) without saturating it, so it is shorter for pixels in bright parts of the image than for pixels in dark regions.
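The trade-off can be made concrete with a small model. This sketch assumes a pixel with a hypothetical full-well capacity of 10,000 electrons, a 30 fps video camera, and illustrative photoelectron rates for a bright and a dark pixel; the ideal exposure is taken as the longest one that does not saturate the pixel, capped by the frame period.

```python
def ideal_exposure_s(photon_rate, full_well, frame_rate):
    """Longest exposure (seconds) that keeps the pixel below saturation,
    capped by the frame period of a video camera."""
    time_to_saturate = full_well / photon_rate
    frame_period = 1.0 / frame_rate
    return min(time_to_saturate, frame_period)

def collected(photon_rate, exposure_s, full_well):
    """Photoelectrons a pixel holds after an exposure, clipped at the full well."""
    return min(photon_rate * exposure_s, full_well)

FULL_WELL = 10_000   # assumed full-well capacity (electrons)
FPS = 30             # assumed frame rate

bright, dark = 5_000_000, 20_000  # assumed photoelectron rates per second
print(ideal_exposure_s(bright, FULL_WELL, FPS))  # 0.002: a short exposure suits it
print(ideal_exposure_s(dark, FULL_WELL, FPS))    # capped at the frame period, 1/30 s

# A shared 2 ms exposure suits the bright pixel but starves the dark one:
print(collected(dark, 0.002, FULL_WELL))         # only about 40 electrons
# A shared 1/30 s exposure suits the dark pixel but saturates the bright one:
print(collected(bright, 1 / FPS, FULL_WELL))     # clipped at the full well
```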

The pixel size in a digital camera affects its dynamic range, since a larger pixel can collect more photons before it saturates. At pixel level, dynamic range is defined as the maximum signal divided by the noise floor. The noise floor is a combination of many noise sources within the sensor. The analogue-to-digital converter is another component that may limit the dynamic range.
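The pixel-level definition can be written as a short calculation. This is a simplified sketch with assumed sensor values (a 10,000-electron full well and a 3-electron noise floor) and an idealised N-bit converter whose range is roughly 2^N:1; treating the overall limit as the smaller of the two figures is a simplification for illustration.

```python
import math

def to_db(ratio):
    """Express a ratio in decibels: 20 * log10(ratio)."""
    return 20 * math.log10(ratio)

def pixel_dynamic_range_db(full_well_e, noise_floor_e):
    """Pixel-level dynamic range: maximum signal divided by noise floor, in dB."""
    return to_db(full_well_e / noise_floor_e)

def adc_dynamic_range_db(bits):
    """Rough upper bound set by an ideal N-bit converter (about 2**bits : 1)."""
    return to_db(2 ** bits)

pixel_db = pixel_dynamic_range_db(10_000, 3)  # about 70.5 dB (assumed values)
adc_db = adc_dynamic_range_db(10)             # about 60.2 dB
effective_db = min(pixel_db, adc_db)          # the tighter limit wins

print(round(pixel_db, 1), round(adc_db, 1), round(effective_db, 1))
```

With these assumed numbers, a 10-bit converter would throw away part of the pixel's range, which is one reason the converter matters.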

Bit depth

Bit depth is the number of bits used to represent the information in one pixel. Security cameras typically have a bit depth of 10 bits. A higher bit depth, for example 12 bits instead of 10, theoretically increases the number of levels that can be detected, but in practice it only improves image quality if the sensor data is good enough. If the sensor data is noisy, there is little to gain from adding more bits. Increasing the meaningful bit depth beyond 12 bits is generally very costly, both in component cost and in complexity, since it requires more advanced electronic components and functions.
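The claim that extra bits help little when the data is noisy can be checked with the standard model of ideal quantisation noise (one LSB divided by the square root of 12), added in quadrature to sensor noise. The two sensor noise levels below are assumptions chosen to represent a noisy and a very clean signal, expressed as fractions of full scale.

```python
import math

def total_noise(sensor_noise, bits, full_scale=1.0):
    """Combine sensor noise with ideal quantisation noise (LSB / sqrt(12))."""
    lsb = full_scale / 2 ** bits
    quantisation_noise = lsb / math.sqrt(12)
    return math.sqrt(sensor_noise ** 2 + quantisation_noise ** 2)

# Assumed noisy sensor: noise is 0.5 % of full scale.
noisy = 0.005
gain_noisy = total_noise(noisy, 10) / total_noise(noisy, 12)

# Assumed very clean sensor: noise is 0.01 % of full scale.
clean = 0.0001
gain_clean = total_noise(clean, 10) / total_noise(clean, 12)

print(round(gain_noisy, 3))  # barely above 1: the extra 2 bits add almost nothing
print(round(gain_clean, 2))  # noticeably above 1: here the extra bits pay off
```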

It is also important to keep in mind that the display in front of the security manager normally has a bit depth of only 8 bits per colour channel. The algorithm that maps the 10 bits from the sensor to the 8 bits of the display (often called tone mapping) is therefore critical for good WDR performance.
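To see why this mapping matters, the sketch below compares two hypothetical 10-to-8-bit mappings: simply dropping the two least significant bits, and a simple logarithmic curve. Neither is presented as Axis' algorithm; the log curve is only an example of a mapping that spends more output codes on the shadows at the expense of the highlights.

```python
import math

def linear_10_to_8(code):
    """Naive mapping: drop the two least significant bits."""
    return code >> 2

def log_10_to_8(code):
    """Illustrative logarithmic curve: 0 maps to 0, 1023 maps to 255."""
    return round(255 * math.log1p(code) / math.log1p(1023))

shadows = range(64)            # darkest 64 of the 1024 sensor codes
highlights = range(960, 1024)  # brightest 64 sensor codes

linear_lo = len({linear_10_to_8(c) for c in shadows})
log_lo = len({log_10_to_8(c) for c in shadows})
linear_hi = len({linear_10_to_8(c) for c in highlights})
log_hi = len({log_10_to_8(c) for c in highlights})

print("shadow tones:", linear_lo, "linear vs", log_lo, "log")
print("highlight tones:", linear_hi, "linear vs", log_hi, "log")
```

The log curve keeps many more distinct shadow tones but squeezes the highlights into a few codes, which is the kind of trade-off a WDR tone-mapping algorithm has to balance.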

Noise

When designing WDR cameras, or indeed any camera, noise is a major challenge. Digital images are subject to many types of noise, which result in pixel values that do not reflect the true intensities of the scene. Which kinds of noise are present depends on how the image is created. Even a perfect camera produces noise, because photon shot noise arises from the nature of light itself. A camera can compensate for noise in the image, but doing so can introduce various types of artifacts (see below).
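Photon shot noise follows Poisson statistics, so for an ideal pixel that collects on average N photons, the SNR is about the square root of N. The Monte Carlo sketch below checks this with NumPy; the photon counts are arbitrary example values.

```python
import numpy as np

rng = np.random.default_rng(seed=1)

def shot_noise_snr(mean_photons, samples=200_000):
    """Estimate the SNR of an ideal pixel limited only by photon shot noise."""
    counts = rng.poisson(lam=mean_photons, size=samples)
    return counts.mean() / counts.std()

for n in (100, 10_000):
    # Simulated SNR next to the theoretical value sqrt(n)
    print(n, round(shot_noise_snr(n), 1), round(float(np.sqrt(n)), 1))
```

This is also why dark areas look noisier: a pixel that collects 100 photons has an SNR of about 10, while one that collects 10,000 has about 100.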

Extending the dynamic range to achieve WDR

There are several ways to extend a camera's dynamic range without increasing the bit depth of the pixels, which, as described above, is expensive. Each of them, however, means sacrificing something else.

One way to achieve WDR is to use different exposure times for different pixels, which can be implemented in various ways. The simplest is to combine two or more full frames captured with different exposure times, taking the signal in the shadows from one image and the signal in the highlights from another.
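A minimal sketch of a two-exposure merge, assuming a hypothetical 10-bit sensor, a long exposure 64 times longer than the short one, and a simple rule: use the long-exposure value unless it is clipped, otherwise use the short-exposure value scaled by the exposure ratio. Real cameras use more elaborate blending; this only shows the principle.

```python
def capture(radiance, gain, saturation=1023):
    """Hypothetical 10-bit capture: scale by exposure, truncate, clip."""
    return [min(int(r * gain), saturation) for r in radiance]

def merge_exposures(long_px, short_px, exposure_ratio, saturation=1023):
    """Take each pixel from the long exposure unless it is clipped; otherwise
    use the short exposure scaled up by the exposure-time ratio."""
    merged = []
    for long_val, short_val in zip(long_px, short_px):
        if long_val < saturation:
            merged.append(float(long_val))
        else:
            merged.append(float(short_val * exposure_ratio))
    return merged

scene = [5, 200, 5_000, 40_000]            # assumed radiances, long-exposure units
ratio = 64                                 # long exposure is 64x the short one
long_px = capture(scene, gain=1.0)         # [5, 200, 1023, 1023]
short_px = capture(scene, gain=1 / ratio)  # [0, 3, 78, 625]
print(merge_exposures(long_px, short_px, ratio))  # [5.0, 200.0, 4992.0, 40000.0]
```

The merged values span a range far beyond 1023, while the highlights are recorded in coarser steps (here 64 units), one of the prices paid for the extended range.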

As they are often implemented, however, multiple exposure times affect the imaging of moving targets: the dark parts of an object are taken from the long exposure and the bright parts from the short one, so motion blur and apparent position differ between areas of different brightness. A moving black and white football will, for example, be more smeared in its darker parts than in its brighter parts, and will appear to be in two different locations. This is one example of an image artifact; artifacts are described in more detail in the next section.
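The size of the effect can be estimated from the object's speed in the image. This sketch assumes the short exposure starts immediately after the long one ends and that apparent position is taken at the temporal centre of each exposure; the speed (600 pixels per second) and exposure times (20 ms and 2 ms) are illustrative values only.

```python
def motion_artifacts(speed_px_s, t_long_s, t_short_s):
    """Blur lengths and apparent position offset for sequential exposures.

    Assumes the short exposure starts right after the long one ends and that
    position is judged at the temporal centre of each exposure.
    """
    blur_long = speed_px_s * t_long_s    # smear in parts taken from the long exposure
    blur_short = speed_px_s * t_short_s  # smear in parts taken from the short exposure
    centre_gap = t_long_s / 2 + t_short_s / 2
    offset = speed_px_s * centre_gap     # distance between the two apparent positions
    return blur_long, blur_short, offset

blur_long, blur_short, offset = motion_artifacts(600, 0.020, 0.002)
print(round(blur_long, 1), round(blur_short, 1), round(offset, 1))  # 12.0 1.2 6.6
```

The dark half of the football would be smeared over about 12 pixels, the bright half over about 1, and the two halves would appear several pixels apart.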

Regardless of how the dynamic range is extended, there is a price to pay: either in cost, because the sensors or processors become much more expensive, or in image quality, because various image artifacts are introduced.

Artifacts

When information from multiple exposures is combined into a WDR image, even the slightest change in the scene can generate artifacts, which can seriously limit the usefulness of the result. A number of different artifacts may occur in a WDR image, some more common than others.

Motion

Motion blur occurs when the scene changes while a single frame is being recorded, either because of rapid movement or because the exposure time is too long relative to the movement in the scene.

Artificial illumination

A related artifact comes from modulated light sources such as fluorescent lighting. Such light is a challenge for all cameras, since cameras normally assume constant illumination. Depending on the camera type, artifacts such as stripes and visible pulsing may appear. In WDR cameras, these artifacts may look somewhat different because of the capture techniques used.
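Stripes appear when different rows of the image collect different amounts of light from the flickering source. The sketch below assumes 50 Hz mains, so the light flickers at 100 Hz with a sinusoidal modulation of 50 %, and a rolling-shutter sensor whose rows start exposing at slightly different times over a 20 ms readout. All of these values are assumptions for illustration.

```python
import math

def integrated_light(t0, exposure, flicker_hz=100.0, depth=0.5):
    """Light collected over [t0, t0 + exposure] from I(t) = 1 + depth*sin(2*pi*f*t)."""
    w = 2 * math.pi * flicker_hz
    return exposure + depth / w * (math.cos(w * t0) - math.cos(w * (t0 + exposure)))

def row_to_row_variation(exposure, rows=1000, readout_s=0.02, **kw):
    """Relative spread of collected light across rows of a rolling shutter,
    where row k starts exposing at k * readout_s / rows."""
    values = [integrated_light(k * readout_s / rows, exposure, **kw)
              for k in range(rows)]
    return (max(values) - min(values)) / (sum(values) / len(values))

print(row_to_row_variation(0.002))  # large: strong stripes
print(row_to_row_variation(0.010))  # essentially zero: one full flicker period
```

Under this model, an exposure that spans a whole number of flicker periods collects the same light in every row. WDR capture needs short exposures for the highlights, which cannot always meet that condition, so the artifacts can look different.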

Visualisation

Another possible artifact is noise appearing in unexpected places. A typical example is a smooth area, such as a wall with only slight differences in illumination, that shows patches of visible noise. This can be aesthetically unpleasing, but is generally a small price to pay for a much wider dynamic range.
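One plausible mechanism, assuming the simple two-exposure merge described earlier and shot-noise-limited pixels, is that neighbouring areas just below and just above the switch-over point come from different exposures. The short exposure collects fewer photons, so its SNR is lower by roughly the square root of the exposure ratio. The full-well capacity and the ratio of 16 below are hypothetical values.

```python
import math

def merged_pixel_snr(photons_long, exposure_ratio, full_well=10_000):
    """Shot-noise-limited SNR of a pixel in a hypothetical two-exposure merge.

    photons_long is what the long exposure would collect without clipping.
    Below the full well the pixel comes from the long exposure; above it,
    from the short exposure, which collects exposure_ratio times fewer photons.
    """
    if photons_long < full_well:
        return math.sqrt(photons_long)
    return math.sqrt(photons_long / exposure_ratio)

# Two neighbouring areas of a wall that differ only slightly in brightness:
just_below = merged_pixel_snr(9_900, exposure_ratio=16)
just_above = merged_pixel_snr(10_100, exposure_ratio=16)
print(round(just_below, 1), round(just_above, 1))  # SNR drops by about 4x
```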

Image capture

A WDR image may also look different because it is so rich in reproduced tones that it is difficult to show on a standard screen. The goal is to show as much detail as possible in all shadows and highlights, mimicking what the human visual system does as its focus moves from one object to another. The unnatural look this can produce is sometimes referred to as ‘cartooning’.

Ghosting

Multiple exposure times affect the imaging of moving targets, since motion blur differs for parts of different brightness. A moving object will, for example, be more smeared in its darker parts than in its brighter parts, which produces ghosting.

Applications and needs

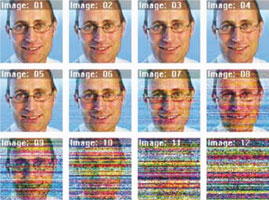

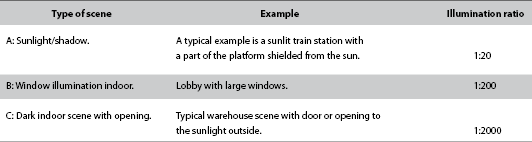

Not all applications require WDR. The following example images, taken with a low dynamic range camera configured to avoid clipping the highlights, show what happens as a person moves into progressively deeper shade. Which image is still acceptable?

If your scene is closest to type A in Table 1 and you answered image 6 or higher, a standard camera should meet your needs. If your scene is closest to type B and you answered image 9 or higher, a standard camera is still OK to use. If your scene is closest to the very difficult type C, you must be able to accept image 12 or higher to use a standard camera. This indicates when WDR technology is needed; it is only an indication, since standard cameras vary in quality.
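The rule of thumb above can be written as a small lookup. The thresholds come from the text; the function and dictionary names are hypothetical, and the sketch assumes image numbers increase as the person moves into deeper shade, so accepting a higher-numbered image means tolerating a darker result.

```python
# Lowest "darkest acceptable image" per scene type, taken from the text above.
MIN_ACCEPTED_IMAGE = {"A": 6, "B": 9, "C": 12}

def standard_camera_sufficient(scene_type, darkest_acceptable_image):
    """Rough indication only: True if a standard (non-WDR) camera should do."""
    return darkest_acceptable_image >= MIN_ACCEPTED_IMAGE[scene_type]

print(standard_camera_sufficient("A", 7))   # True
print(standard_camera_sufficient("C", 10))  # False: consider a WDR camera
```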

Measuring dynamic range

The dynamic range capability of a camera is often given in decibels (dB), as a way to describe how well the camera copes with difficult scenes containing both very bright and very dark objects. The dB value expresses a ratio: the ratio between the radiance of the brightest and the dimmest object that the camera can capture. Note that this is not the same as the illumination ratio used in Table 1. The value is calculated as 20 times the base-10 logarithm of the ratio, so a ratio of 1000:1, whose logarithm is 3, corresponds to 60 dB.
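The conversion works in both directions, which helps when reading datasheets. A short sketch:

```python
import math

def ratio_to_db(ratio):
    """Dynamic range in dB: 20 times the base-10 logarithm of the ratio."""
    return 20 * math.log10(ratio)

def db_to_ratio(db):
    """Inverse conversion: brightest-to-dimmest ratio for a given dB figure."""
    return 10 ** (db / 20)

print(round(ratio_to_db(1000), 2))  # 60.0
print(round(db_to_ratio(70)))       # 3162, i.e. about 3162:1
print(round(db_to_ratio(100)))      # 100000, i.e. 100,000:1
```

Every additional 20 dB therefore means a tenfold larger ratio between the brightest and dimmest objects.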

The dimmest detectable level can be defined as the noise floor of the sensor pixel, since any signal below this level is drowned in noise. With this definition, a good image sensor can reach a dynamic range of about 70 dB. Some special sensors can exceed 100 dB by raising the upper detection limit with techniques such as multi-frame exposure. This extends the dynamic range of the sensor, but it is not necessarily the best way to achieve WDR.
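In an idealised model, merging exposures whose times differ by a factor k raises the upper detection limit by k while leaving the noise floor unchanged, adding 20·log10(k) dB. The sketch below applies this to the 70 dB figure above; the exposure ratios are assumed values, and real merges lose some of this gain to noise and artifacts.

```python
import math

def multi_exposure_dr_db(single_exposure_db, exposure_ratio):
    """Idealised dynamic range after merging exposures that differ by exposure_ratio.

    Assumes the short exposure raises the upper limit by exposure_ratio and the
    noise floor, set by the long exposure, stays the same.
    """
    return single_exposure_db + 20 * math.log10(exposure_ratio)

print(round(multi_exposure_dr_db(70, 16), 1))  # 94.1
print(round(multi_exposure_dr_db(70, 32), 1))  # 100.1
```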

Some modern surveillance cameras use sensors with extended dynamic range, which allows them to handle difficult scenes better. The dB figure cannot, however, fully describe WDR capability. The best way to appreciate what such cameras can do is to test them.

When comparing product datasheets from different camera manufacturers, keep in mind that a dB figure is only an approximation of a camera's dynamic range capabilities. Axis normally states modest ratios, since a high-quality WDR image also depends on the level of artifacts and the quality of the processing. So do not be surprised if an Axis camera outperforms a competing camera that has a slightly higher stated ratio.

Figure 8 shows two more example images of a scene with wide dynamic range. The first was taken with a camera with a high dB value, and the second with a camera with a low dB value.

Which one is the best from a video surveillance perspective?

Axis’ solution for wide dynamic range

In 2011, Axis introduced its improved WDR technology, called Wide Dynamic Range-dynamic capture. WDR-dynamic capture combines the latest developments in sensor technology and image processing with Axis’ unique expertise in building network cameras, delivering WDR performance optimised for security and surveillance requirements.

Figure 9 is an image of a parking garage scene taken with an Axis camera that incorporates WDR-dynamic capture technology. The camera has captured the very wide dynamic range of the scene and, using advanced image processing, adapted the image to the modest dynamic range of a standard display screen.

Results from a WDR camera vary with factors such as the complexity of the scene and the amount of movement. As with any video surveillance situation, the most important questions are: what do you want to see, and how do you want the captured image to be presented?

Conclusion

Many surveillance situations involve great variation in light levels within the scene. This presents a technical challenge for security cameras, and it is difficult to achieve good WDR performance without introducing image artifacts.

Axis’ latest solution, Wide Dynamic Range-dynamic capture, is a technology optimised for surveillance applications and is available in AXIS Q1604 Network Cameras.

Measuring how well a camera handles a wide dynamic range is difficult, and the units typically used on product datasheets (such as dB) are not a reliable indication of actual WDR performance. As always, Axis recommends testing cameras in the real environment before making a decision.

Useful links

For more information, see the following links:

* Axis Communications – ‘In the best of light – The challenges of minimum illumination’, www.axis.com/files/whitepaper/wp_light_sensitivity_41137_en_1011_lo.pdf

* Axis Communications – ‘CCD and CMOS sensor technology’, www.axis.com/files/whitepaper/wp_ccd_cmos_40722_en_1010_lo.pdf

* Axis Communications – ‘Lighting for network video – Lighting design guide’, www.axis.com/files/whitepaper/wp_lighting_for_netvid_41222_en_1012_lo.pdf

| Contact | Details |
| --- | --- |
| Tel | +27 11 548 6780 |
| Email | marcel.bruyns@axis.com |
| www | www.axis.com |


© Technews Publishing (Pty) Ltd. | All Rights Reserved.