AI, deep learning and intelligent systems are terms that are becoming part of the language of CCTV and security systems. They are borrowed from the wealth of development over the past few years in supercomputing and in human-versus-AI matches in games such as chess and Go.

Recently, this kind of development was taken into the military space with simulated dogfights between F-16 fighter jets in a controlled virtual environment. In this AlphaDogfight contest, AI-piloted jets were matched against each other and, in the final event, against a human US Air Force F-16 pilot. In the much-discussed final, the AI-controlled fighter won 5-0.

This seems a compelling argument for implementing AI-based systems and reducing the need for operators. However, a number of specialists, including fighter pilots themselves, have pointed out a variety of issues. Foremost among these was that the matches took place in a limited virtual environment with a small number of factors to consider, rather than in real life.

While programming the AI to take into account flying tactics, aircraft capabilities and the environment would have been highly complex, the simulation still can’t reproduce the conditions of a real-world dogfight, never mind the broader operating conditions faced by air force pilots. Part of the problem, as Colin Price, a US Navy F/A-18 squadron commander, points out (see reference 1 below), was that the AI had perfect information at all times and did not have to deal with rules of engagement in complex real-world settings.

As Will Knight notes in a Wired article (see reference 2 below), the simulated environment is far simpler than the real one, and there is still much to be said for a human pilot’s ability to understand context and apply common sense when faced with a new challenge.

The reality of an AI-enhanced future

The dogfights revealed some key factors behind the AI pilots’ success. There was no need to limit responses to human capacities such as G-force tolerance or self-preservation, which enabled the AI to manoeuvre in ways impossible for a human pilot. Similarly, the AI exploited its ability to seize split-second opportunities, something a human would find difficult to match.

The exercise showed that AI is going to be a major influence in the future. Closer to our world, abilities such as constant alertness and instantaneous reactions offer similar advantages for electronic systems in CCTV and security.

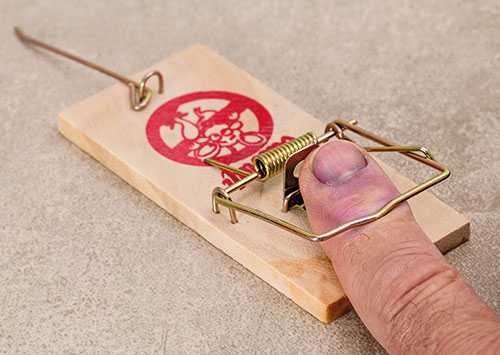

Technology suppliers who claim to have, or actually have, working AI or intelligent systems use these in their marketing. Some of the claimed performance benefits are certainly valid, and we are going to see far more of these features in the industry. However, those on the cutting edge, especially new users, can be badly hurt if things go wrong. So what lessons can security and CCTV learn from the successes and shortfalls of this new ‘intelligent’ technology and software?

Service delivery is key

I emphasise the importance of service delivery in all my consulting and training – the input has to lead to a benefit for the client. When it comes to AI or any kind of new technology, the first question a user should be asking is, “Does it work in the real world?” and more specifically, “Does it work in my world?”

Whatever the claims of salespeople and the referrals to other sites, trial testing the technology in your own environment may throw up many issues. Run a trial period before committing, and make sure you don’t bear the cost if it fails. There have to be some benefits to being a test or beta user. If the supplier can use the trial to improve the technology and resolve the issues, so much the better.

Secondly, one of the most critical issues I find with the sale of AI systems is that one aspect of a system may be truly intelligent and automated, but this capability is implied for all its other features as well, even though they lack it. Suddenly you need an operator physically analysing video and typing in search criteria for people or vehicles, rather than the software doing it automatically as initially claimed.

Thirdly, check the false alarm rates and whether these are going to affect service delivery; see ‘The consequences of false alerts’ (reference 3 below) on the implications for performance.

People tend to be captivated by technology, whether it is a new gadget, the latest phone, performance reviews in rugby or goal-line technology in football. Birthday and Christmas presents are anticipated eagerly, tried out once and then placed in the cupboard for the rest of eternity. So the question is whether the new technology provides a real solution to a problem actually being experienced, and whether it is going to solve that problem.

In some cases, a technology may look really good but not actually contribute to the desired outcome. I’ve seen one case where a single sophisticated feature in the package wowed decision makers and led to a purchase costing millions, even though that feature had nothing to do with the purpose of the product or the expected outcomes. The wow factor of just one element can blast away all objective thinking about the whole package.

Keep your focus and your feet on the ground when evaluating the technology. Ask whether the same result can be achieved in another way, whether you really need it, and whether it is worth the cost. If it satisfies all of these criteria and contributes to service delivery, then everybody wins.

Where did it go to school?

Will the technology need to make use of machine learning or self-learning capabilities to work properly? It may have ‘learned’ in a particular setting or for a specific situation, but it will need to relearn for yours. Faced with a novel situation, the likelihood of a correct response from an ‘intelligent’ system becomes far more variable. The learning is only as good as the inputs, the system’s capacity to use them, the person who wrote the initial software and the team that supports it.

In the AlphaDogfight scenario, teams of people were preparing, evaluating performance and tweaking the AI throughout the whole process. Where incidents the AI can learn from are relatively rare, the learning process can take a long time, especially if a wide range of activities needs to be catered for. Teaching the AI can be incredibly time-consuming, so who is going to be responsible for making sure it learns the right things on your site, and how much time will this take away from what people should be doing in their jobs?

Unfortunately, the people who install your ‘self-learning’ security system are not going to be hanging around the way they do when F-16s are involved. If it is a self-learning system, how will you know it is learning the right things, and by when can you expect it to operate at the required level? Responsibility for this machine learning needs to be allocated up front, and payment needs to be tied to the operating capability actually achieved in terms of service delivery.

Other questions to consider

Is the technology going to require special skills that don’t exist in the current control room? How easy and expensive is it to acquire these skills, and what implications may this have for control room design and interfaces? If you use personnel at standard security guard level as control room operators for highly technical systems, you will either lose much of the benefit of the sophisticated technology or your control room will fail.

How do you manage the ‘knowledge base’ built up over time by existing personnel if they are no longer suited to the new technology? There was a lot of talk about using drones from control rooms, yet the real-life need for specialised drone pilots has almost crippled the potential of this technology for many small users. For bigger users whose cost structure and operational needs can support the technology, it still needs to be integrated into the control room systems if it is to work effectively.

How is the technology going to affect the workload of the control room, and can this be accommodated with the current number and type of personnel? Will the technology require its own workstations, how can it be integrated into the existing systems and where will it be positioned? Is it expected to contribute to existing decision-making processes, or will it be standalone? These fundamental questions determine whether the benefits of the new technology can be realised, whether it can be integrated effectively, and how it will affect management decision-making and awareness.

What are the costs of maintenance? I increasingly believe that maintenance costs need to be built into any contract for new technology. I’ve seen various high-tech projects fail days or months after installation because the equipment couldn’t be serviced or maintained under the site conditions, because it wasn’t robust enough, or because the maintenance budget was insufficient.

Making the supplier take responsibility for maintenance also keeps them more honest. If they don’t want the role, what are they worried about? I’ve also seen products worth tens of millions standing unused because of design factors that limit their use (even restrictions imposed by the buildings in which they were installed), or because there was nobody to maintain them.

Who is the decider?

Who or what makes the decisions based on information or conditions generated by AI systems? In effect, this is a question of who takes responsibility for decisions, including automated decisions within the system. What is communicated, and to whom? How do the standard procedures change? Who is the point of contact if the AI system fails, and what is the impact on the remaining systems?

It will take exceptionally brave users to allow AI systems to take decisions that affect people’s welfare or human life. Will Knight writes in his Wired article that US military leaders, and the organisers of the AlphaDogfight contest, say they have no desire to let machines make life-and-death decisions on the battlefield. He points out that the Pentagon has long resisted giving automated systems the ability to decide when to fire on a target independently of human control, and that a Department of Defense directive explicitly requires human oversight of autonomous weapons systems.

It is not only users who need to be careful about decision-making based on AI systems. Clients should ensure that technology providers hold or share liability for the decisions their systems make, whether in classifying a particular condition that is then acted upon or in initiating action based on the AI’s processing and decision-making. Even face recognition and number plate recognition systems will need to be mediated by a human verifier if severe public consequences are to be avoided. Failure also brings a crisis of confidence in the technology itself, and that can mean losing something that could have turned out to be truly useful if handled more effectively.

In the conclusion of his article on the dogfight, US Navy pilot Colin Price reflects on the impact of AI technology: “So, if tomorrow my seven-year-old daughter decides she wants to become a naval aviator, I am not going to shoot down the notion and go on a rant about the last generation of fighter pilots. I know there will be a Navy jet for her to fly. My future grandchildren, however?”

He also comments that the aircraft has been built around a person, but that it already contains substantial technology that affects what he can do and even verifies his decision-making. This theme of ‘augmentation’ of the human by technology, rather than replacement, is going to be around for a while yet and currently offers the best solution.

The challenge for both users and service providers will be to find the best and most valid approach to using a seductive range of technologies to deliver service in the real world. For the meantime, that service is still going to be delivered by competent people.

References

[1] Colin Price. Navy F/A-18 Squadron Commander’s Take On AI Repeatedly Beating Real Pilot In Dogfight. The Drive, 24 August 2020. https://www.thedrive.com/the-war-zone/35947/navy-f-a-18-squadron-commanders-take-on-ai-repeatedly-beating-real-pilot-in-dogfight

[2] Will Knight. A Dogfight Renews Concerns About AI’s Lethal Potential. Wired, 25 August 2020. https://www.wired.com/story/dogfight-renews-concerns-ai-lethal-potential/

[3] Craig Donald. The consequences of false alerts. Hi-Tech Security Solutions, May 2019. https://www.securitysa.com/9445a

Dr Craig Donald is a human factors specialist in security and CCTV. He is a director of Leaderware which provides instruments for the selection of CCTV operators, X-ray screeners and other security personnel in major operations around the world. He also runs CCTV Surveillance Skills and Body Language, and Advanced Surveillance Body Language courses for CCTV operators, supervisors and managers internationally, and consults on CCTV management.

Email: sales@leaderware.com

www: www.leaderware.com


© Technews Publishing (Pty) Ltd. | All Rights Reserved.